According to Reuters, OpenAI researchers warned the company’s board of directors about a groundbreaking AI discovery just days before CEO Sam Altman’s sudden firing. The researchers expressed concerns about the potential risks associated with this powerful technology, urging the board to proceed with caution and develop clear guidelines for its ethical use.

The AI algorithm, reportedly named Q* (OpenAI Q-star), represents a leap forward in the quest for artificial general intelligence (AGI), a hypothetical AI capable of surpassing human intelligence in most economically valuable tasks.

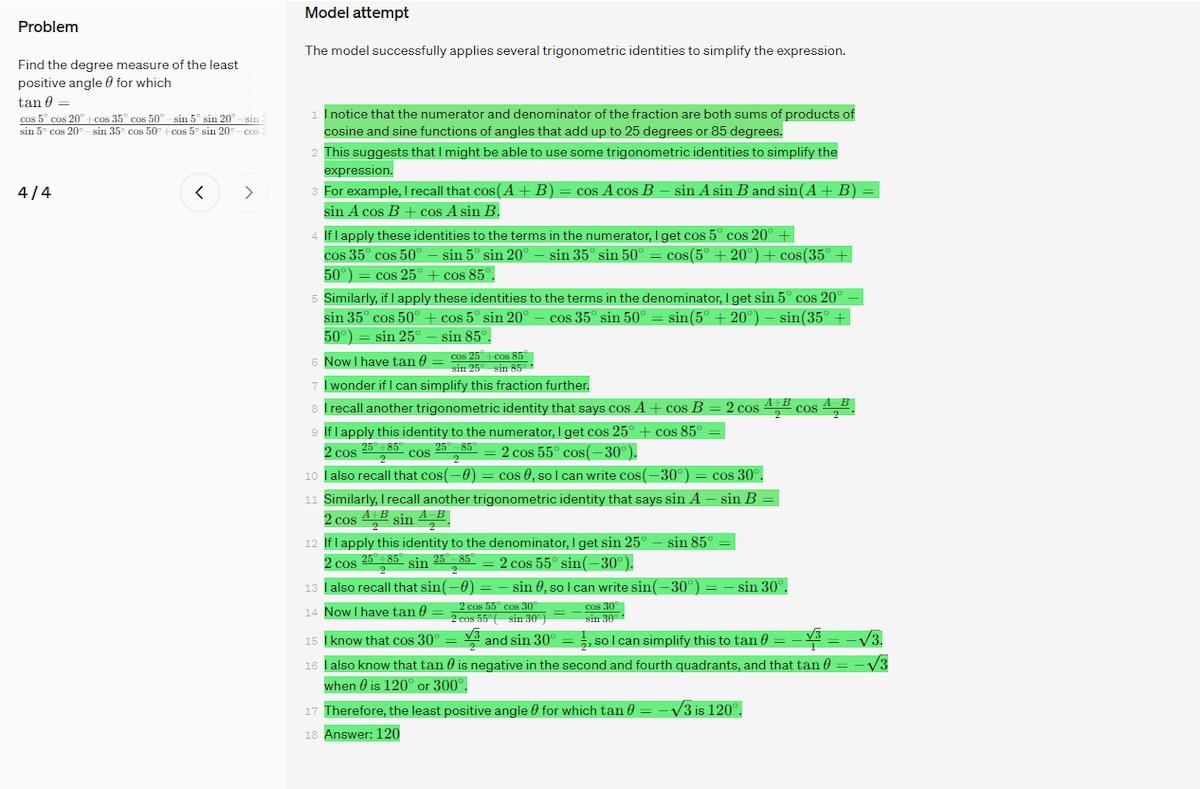

Researchers believe OpenAI Q-star’s ability to solve mathematical problems, albeit at a grade-school level, indicates its potential to develop reasoning capabilities akin to human intelligence.

However, the researchers also highlighted the potential dangers of such advanced AI, citing long-standing concerns among computer scientists that AI could pose a threat to humanity. They emphasized the need to carefully consider the ethical implications of this technology and the importance of developing safeguards to prevent its misuse.

OpenAI Q-star may have been in the works for a few months now

After Sam Altman’s eventful firing, his deal with Microsoft, and his comeback, the artificial intelligence company OpenAI shook the entire technology world today with OpenAI Q-star. To understand what this system is, we first need to understand what AGI is and what it can do.

Artificial general intelligence (AGI), also known as strong AI or full AI, is a hypothetical type of AI that would be able to understand and reason at the same level as a human being. AGI would be capable of performing any intellectual task that a human can, and would likely far surpass human capabilities in many areas.

While AGI does not yet exist, many experts believe it is only a matter of time before it is achieved. Some, such as Ray Kurzweil, believe it could arrive within a few decades: Kurzweil has predicted human-level AI by 2029 and a technological singularity by 2045. Others, such as Stuart Russell, believe it is more likely to take centuries.

Rowan Cheung, founder of The Rundown AI, posted on Twitter/X that Sam Altman said in a speech the day before he left OpenAI: “Is this a tool we’ve built or a creature we have built?”

A day before Sam was fired, he gave this chilling speech:

“Is this a tool we’ve built or a creature we have built?”

This timeline would add up with the recent discovery of Q*. pic.twitter.com/BnjcHsdSnl

— Rowan Cheung (@rowancheung) November 23, 2023

Although the OpenAI Q-star news came out today, let’s take you back in time.

On May 31, 2023, OpenAI published a blog post titled “Improving Mathematical Reasoning with Process Supervision”.

In the blog post, OpenAI described a new method for training large language models to solve mathematical problems. This method, called “process supervision”, involves rewarding the model for each correct step in a chain of thought, rather than only for the correct final answer.

The authors compared process supervision with the traditional method of “outcome supervision”, which rewards only the final answer. They found that process supervision led to significantly better performance on a benchmark dataset of mathematical problems (the MATH dataset).
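To make the difference between the two approaches concrete, here is a toy Python sketch. It is our own illustration, not OpenAI’s training code and certainly not Q*: the `Solution` class, the hand-written step labels, and the choice to multiply step rewards into a single score are all assumptions made for this example. A real system would train a reward model to predict such labels rather than read them from a list.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Solution:
    """A worked solution split into reasoning steps (toy data, hypothetical labels)."""
    steps: List[str]
    step_correct: List[bool]    # assumed human label per step: is this step valid?
    final_answer_correct: bool  # does the final answer match the reference answer?


def outcome_reward(sol: Solution) -> float:
    """Outcome supervision: a single signal based only on the final answer."""
    return 1.0 if sol.final_answer_correct else 0.0


def process_rewards(sol: Solution) -> List[float]:
    """Process supervision: one signal for every reasoning step."""
    return [1.0 if ok else 0.0 for ok in sol.step_correct]


def solution_score(sol: Solution) -> float:
    """Aggregate step rewards by multiplying them (an assumption for this sketch):
    the result is 1.0 only if every step is valid."""
    return math.prod(process_rewards(sol))


# Question: what is 4 / 2?
sound = Solution(
    steps=["4 / 2 = 2"],
    step_correct=[True],
    final_answer_correct=True,
)
lucky = Solution(
    steps=["4 / 2 = 4 - 2", "4 - 2 = 2"],  # first step uses an invalid rule
    step_correct=[False, True],
    final_answer_correct=True,
)

for name, sol in [("sound", sound), ("lucky", lucky)]:
    print(name, outcome_reward(sol), process_rewards(sol), solution_score(sol))
```

The “lucky” solution reaches the right answer through a bogus first step, so outcome supervision rewards it fully while process supervision flags that step. Catching this kind of flawed reasoning is the motivation behind rewarding each step.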

Looking back at the Reuters report, it states that it obtained the following from an anonymous person connected to OpenAI: “Some at OpenAI believe Q* (pronounced Q-Star) could be a breakthrough in the startup’s search for what’s known as artificial general intelligence (AGI), one of the insiders said. OpenAI defines AGI as autonomous systems that surpass humans in most economically valuable tasks.

Given vast computing resources, the new model was able to solve certain mathematical problems, the person said on condition of anonymity because the individual was not authorized to speak on behalf of the company. Though only performing math on the level of grade-school students, acing such tests made researchers very optimistic about Q*’s future success, the source said.”

We know that OpenAI’s research on process supervision was done using GPT-4, but the “new model [that] was able to solve certain mathematical problems” described by Reuters’ anonymous source sounds quite similar to the method used in that earlier research.

What’s the catch?

Let’s talk about why the entire tech world has reacted so strongly to OpenAI Q-star. I’m sure you’ve seen sci-fi movies in which artificial intelligence, once integrated into human life, takes over the world.

HAL 9000 in 2001: A Space Odyssey, Skynet in The Terminator, and the Agents in The Matrix are the best-known examples of this theme, and the common thread of these films is an artificial intelligence that trains itself and comes to the conclusion that humanity is harmful to the world. That is one of the terrible potential outcomes of AGI. We know that AI models today are developed by training on specific datasets, which raises all kinds of privacy concerns.

As you may recall, for this reason alone some countries have restricted ChatGPT; Italy, for example, temporarily banned its use within its borders.

What if AI models could solve complex problems by themselves, without needing a dataset? Would it be possible to predict the outcome, and whether it would be beneficial for us?

AI regulation has started to be discussed at this point, but no one knows what kind of research companies are doing behind closed doors.

Of course, we’re not saying that OpenAI Q-star will be the end of humanity. But uncertainty arouses fear in humans, as it does in every biological creature.

Perhaps Sam Altman had the same questions in his head when he said, “Is this a tool we’ve built or a creature we have built?”

How in regards to the good ending?

Maybe there is no need to be so pessimistic, and OpenAI Q-star will be one of the biggest steps toward a brighter future that the world and the scientific community have ever taken. AGIs could potentially solve problems that humans cannot interpret or solve.

Imagine a scientist who never gets tired and can analyze problems with 100% accuracy. How long do you think it would take this scientist to find a cure for cancer, which has caused millions of deaths over thousands of years, by figuring out why sharks are thought to be so resistant to it?

What if the world’s problems, such as hunger, global warming, and soil pollution, were no longer weighing on our planet when you woke up tomorrow? A properly functioning AGI could, in principle, help with all of this too.

Maybe it’s too early to talk about all this and we’re just being paranoid. Let’s leave it to time and hope for the best for all of us.

Thanks for reading.